Abstract

The aim of multicriteria decision aiding is to give the decision maker a recommendation concerning a set of objects evaluated from multiple points of view called criteria. Since a rational decision maker acts with respect to his/her value system, in order to recommend the most-preferred decision, one must identify decision maker's preferences. In this paper, we focus on preference discovery from data concerning some past decisions of the decision maker. We consider the preference model in the form of a set of "if..., then..." decision rules discovered from the data by inductive learning. To structure the data prior to induction of rules, we use the Dominance-based Rough Set Approach (DRSA). DRSA is a methodology for reasoning about data, which handles ordinal evaluations of objects on considered criteria and monotonic relationships between these evaluations and the decision. We review applications of DRSA to a large variety of multicriteria decision problems.

multicriteria decision aiding; ordinal classification; choice; ranking; Dominance-based Rough Set Approach; preference modeling; decision rules

Rough set and rule-based multicriteria decision aiding* * Invited paper

Roman SlowinskiI, ** ** Corresponding author ; Salvatore GrecoII; Benedetto MatarazzoII

IInstitute of Computing Science, Poznan University of Technology, 60-965 Poznan, and Systems Research Institute, Polish Academy of Sciences, 01-447 Warsaw, Poland. E-mail: Roman.Slowinski@cs.put.poznan.pl

IIDepartment of Economics and Business, University of Catania, Corso Italia, 55, 95129 Catania, Italy. E-mails: salgreco@unict.it, matarazz@unict.it

ABSTRACT

The aim of multicriteria decision aiding is to give the decision maker a recommendation concerning a set of objects evaluated from multiple points of view called criteria. Since a rational decision maker acts with respect to his/her value system, in order to recommend the most-preferred decision, one must identify decision maker's preferences. In this paper, we focus on preference discovery from data concerning some past decisions of the decision maker. We consider the preference model in the form of a set of "if..., then..." decision rules discovered from the data by inductive learning. To structure the data prior to induction of rules, we use the Dominance-based Rough Set Approach (DRSA). DRSA is a methodology for reasoning about data, which handles ordinal evaluations of objects on considered criteria and monotonic relationships between these evaluations and the decision. We review applications of DRSA to a large variety of multicriteria decision problems.

Keywords: multicriteria decision aiding, ordinal classification, choice, ranking, Dominance-based Rough Set Approach, preference modeling, decision rules.

1 INTRODUCTION

In this paper, we review a multicriteria decision aiding methodology which employs decision maker's (DM's) preference model in form of a set of decision rules discovered from some preference data. Multicriteria decision problems concern a finite set of objects (also called alternatives, actions, acts, solutions, etc.) evaluated by a finite set of criteria (also called attributes, features, variables, etc.), and raise one of the following questions: (i) how to assign the objects to some ordered classes (ordinal classification), (ii) how to choose the best subset of objects (choice or optimization), or (iii) how to rank the objects from the best to the worst (ranking). The answer to everyone of these questions involves an aggregation of the multicriteria evaluations of objects, which takes into account preferences of the DM. In consequence, the aggregation formula is at the same time the DM's preference model. Thus, any recommendation referring to one of the above questions must be based on the DM's preference model. The preference data used for building this model, as well as the way of building and using it in the decision process, are main factors distinguishing various multicriteria decision aiding methodologies.

In our case, we assume that the preference data includes either observations of DM's past decisions in the same decision problem, or examples of decisions consciously elicited by the DM on demand of an analyst. This way of preference data elicitation is called indirect, by opposition to direct elicitation when the DM is supposed to provide information leading directly to definition of all preference model parameters, like weights and discrimination thresholds of criteria, trade-off rates, etc. (see, e.g., Roy, 1996).

Past decisions or decision examples may be, however, inconsistent with the dominance principle commonly accepted for multicriteria decision problems. Decisions are inconsistent with the dominance principle if:

-

in case of ordinal classification: object

a has been assigned to a worse decision class than object

b, although

a is at least as good as

b on all the considered criteria,

i.e. a dominates

b;

-

in case of choice and ranking: pair of objects (

a,

b) has been assigned a degree of preference worse than pair (

c,

d), although differences of evaluations between

a and

b on all the considered criteria is at least as good as respective differences of evaluations between

c and

d,

i.e. pair (

a,

b) dominates pair (

c,

d)s.

Thus, in order to build a preference model from partly inconsistent preference data, we had an idea to structure this data using the concept of a rough set introduced by Pawlak (1982, 1991). Since its conception, rough set theory has often proved to be an excellent mathematical tool for the analysis of inconsistent description of objects. Originally, its understanding of inconsistency was different, however, than the above inconsistency with the dominance principle. The original rough set philosophy is based on the assumption that with every object of the universe U there is associated a certain amount of information (data, knowledge). This information can be expressed by means of a number of attributes. The attributes describe the objects. Objects which have the same description are said to be indiscernible (or similar) with respect to the available information. The indiscernibility relation thus generated constitutes the mathematical basis of rough set theory. It induces a partition of the universe into blocks of indiscernible objects, called elementary sets, which can be used to build knowledge about a real or abstract world. The use of the indiscernibility relation results in information granulation.

Any subset X of the universe may be expressed in terms of these blocks either precisely (as a union of elementary sets) or approximately. In the latter case, the subset X may be characterized by two ordinary sets, called the lower and upper approximations. A rough set is defined by means of these two approximations, which coincide in the case of an ordinary set. The lower approximation of X is composed of all the elementary sets included in X (whose elements, therefore, certainly belong to X), while the upper approximation of X consists of all the elementary sets which have a non-empty intersection with X (whose elements, therefore, may belong to X). The difference between the upper and lower approximation constitutes the boundary region of the rough set, whose elements cannot be characterized with certainty as belonging or not to X (by using the available information). The information about objects from the boundary region is, therefore, inconsistent or ambiguous. The cardinality of the boundary region states, moreover, the extent to which it is possible to express X in exact terms, on the basis of the available information. For this reason, this cardinality may be used as a measure of vagueness of the information about X.

Some important characteristics of the rough set approach makes it a particularly interesting tool in a variety of problems and concrete applications. For example, it is possible to deal with both quantitative and qualitative input data and inconsistencies need not to be removed prior to the analysis. In terms of the output information, it is possible to acquire a posteriori information regarding the relevance of particular attributes and their subsets to the quality of approximation considered within the problem at hand. Moreover, the lower and upper approximations of a partition of U into decision classes, prepare the ground for inducing certain and possible knowledge patterns in the form of "if... then..." decision rules.

Several attempts have been made to employ rough set theory for decision aiding (Slowinski, 1993; Pawlak & Slowinski, 1994). The Indiscernibility-based Rough Set Approach (IRSA) is not able, however, to deal with preference ordered attribute scales and preference ordered decision classes. In multicriteria decision analysis, an attribute with a preference ordered scale (value set) is called a criterion.

An extension of the IRSA which deals with inconsistencies with respect to dominance principle, typical for preference data, was proposed by Greco, Matarazzo & Slowinski (1998a, 1999a,b). This extension, called the Dominance-based Rough Set Approach (DRSA) is mainly based on the substitution of the indiscernibility relation by a dominance relation in the rough approximation of decision classes. An important consequence of this fact is the possibility of inferring (from observations of past decisions or from exemplary decisions) the DM's preference model in terms of decision rules which are logical statements of the type "if..., then...". The separation of certain and uncertain knowledge about the DM's preferences is carried out by the distinction of different kinds of decision rules, depending upon whether they are induced from lower approximations of decision classes or from the difference between upper and lower approximations (composed of inconsistent examples). Such a preference model is more general than the classical functional models considered within multi-attribute utility theory or the relational models considered, for example, in outranking methods (Greco et al., 2002c, 2004; Slowinski et al., 2002b).

This paper is a review based on previous publications. In the next section, we present some basics on the Indiscernibility-based Rough Set Approach (IRSA) as well as on its extension to similarity relation. In Section 3, we explain the need of replacing indiscernibility or similarity relation by dominance relation in the definition of rough sets, when considering preference data. This leads us to Section 4, where Dominace-based Rough Set Approach (DRSA) is presented with respect to multicriteria ordinal classification. This section also includes two special versions of DRSA: Variable Consistency DRSA (VC-DRSA) and Stochastic DRSA. Section 5 presents DRSA with respect to multicriteria choice and ranking. In Sections 4 and 5, application of DRSA to all three categories of multicriteria decision problems is explained by the way of examples. Section 6 groups conclusions and characterizes other relevant extensions and applications of DRSA to decision problems. Finally, Section 7 provides information about additional sources of information about rough set theory and applications.

2 SOME BASICS ON INDISCERNIBILITY-BASED ROUGH SET APPROACH (IRSA)

2.1 Definition of rough approximations by IRSA

For algorithmic reasons, we supply the information regarding the objects in the form of a data table, whose separate rows refer to distinct objects and whose columns refer to the different attributes considered. Each cell of this table indicates an evaluation (quantitative or qualitative) of the object placed in that row by means of the attribute in the corresponding column.

Formally, a data table is the 4-tuple S  , where U is a finite set of objects (universe),

, where U is a finite set of objects (universe),  is a finite set of attributes, Vq is the value set of the attribute q,

is a finite set of attributes, Vq is the value set of the attribute q,  and

and  is a total function such that

is a total function such that  for each

for each  ,

,  , called the information function.

, called the information function.

Each object x of U is described by a vector (string)

called the description of x in terms of the evaluations of the attributes from Q. It represents the available information about x.

To every (non-empty) subset of attributes P we associate an indiscernibility relation on U, denoted by Ip and defined as follows:

If  , we say that the objects x and y are P-indiscernible. Clearly, the indiscernibility relation thus defined is an equivalence relation (reflexive, symmetric and transitive). The family of all the equivalence classes of the relation Ip is denoted by

, we say that the objects x and y are P-indiscernible. Clearly, the indiscernibility relation thus defined is an equivalence relation (reflexive, symmetric and transitive). The family of all the equivalence classes of the relation Ip is denoted by  and the equivalence class containing an object

and the equivalence class containing an object  is denoted by

is denoted by  . The equivalence classes of the relation Ip are called the P-elementary sets or granules of knowledge encoded by P.

. The equivalence classes of the relation Ip are called the P-elementary sets or granules of knowledge encoded by P.

Let S be a data table, X be a non-empty subset of U and Æ  . The set X may be characterized by two ordinary sets, called the P-lower approximation of X (denoted by

. The set X may be characterized by two ordinary sets, called the P-lower approximation of X (denoted by  ) and the P-upper approximation of X (denoted by

) and the P-upper approximation of X (denoted by  ) in S. They can be defined, respectively, as:

) in S. They can be defined, respectively, as:

The family of all the sets  having the same P-lower and P-upper approximations is called a P-rough set. The elements of

having the same P-lower and P-upper approximations is called a P-rough set. The elements of  are all and only those objects

are all and only those objects  which belong to the equivalence classes generated by the indiscernibility relation Ip contained in X. The elements of

which belong to the equivalence classes generated by the indiscernibility relation Ip contained in X. The elements of  are all and only those objects

are all and only those objects  which belong to the equivalence classes generated by the indiscernibility relation Ip containing at least one object X belonging to X. In other words,

which belong to the equivalence classes generated by the indiscernibility relation Ip containing at least one object X belonging to X. In other words,  is the largest union of the P-elementary sets included in X, while

is the largest union of the P-elementary sets included in X, while  is the smallest union of the P-elementary sets containing X.

is the smallest union of the P-elementary sets containing X.

The lower and upper approximations can be written in an equivalent form, in terms of unions of elementary sets as follows:

The P-boundary of X in S, denoted by  , is defined as:

, is defined as:

The term rough approximation is a general term used to express the operation of the P-lower and P-upper approximation of a set or of a union of sets. The rough approximations obey the following basic laws (cf. Pawlak, 1991):

-

the inclusion property:

,

, -

the complementarity property:

Directly from the definitions, we can also get the following properties of the P-lower and P-upper approximations (Pawlak, 1982, 1991):

Therefore, if an object x belongs to  , it is also certainly contained in X, while if x belongsto

, it is also certainly contained in X, while if x belongsto  , it is only possibly contained in X.

, it is only possibly contained in X.  constitutes the doubtful region of X: using the knowledge encoded by P nothing can be said with certainty about the inclusion of its elements in set X.

constitutes the doubtful region of X: using the knowledge encoded by P nothing can be said with certainty about the inclusion of its elements in set X.

If the P-boundary of X is empty (i.e.  Æ) then the set X is an ordinary set, called the P-exact set. By this, we mean that it may be expressed as the union of some P-elementary sets. Otherwise, if

Æ) then the set X is an ordinary set, called the P-exact set. By this, we mean that it may be expressed as the union of some P-elementary sets. Otherwise, if  Æ, then the set X is a P-rough set and may be characterized by means of

Æ, then the set X is a P-rough set and may be characterized by means of  and

and  .

.

The following ratio defines an accuracy measure of the approximation of  by means of the attributes from P:

by means of the attributes from P:  , where

, where  denotes the cardinality of a (finite) set Y. Obviously,

denotes the cardinality of a (finite) set Y. Obviously,  . If

. If  , then X is a P-exact set. If

, then X is a P-exact set. If  , then X is a P-rough set.

, then X is a P-rough set.

Another ratio defines a quality measure of the approximation of  by means of the attributes from P:

by means of the attributes from P:  . The quality

. The quality  represents the relative frequency of the objects correctly assigned by means of the attributes from P. Moreover,

represents the relative frequency of the objects correctly assigned by means of the attributes from P. Moreover,  , and

, and  iff

iff  , while

, while  iff

iff  .

.

The definition of approximations of a subset  can be extended to a classification, i.e. a partition

can be extended to a classification, i.e. a partition  of U. The subsets

of U. The subsets  ,

,  , are disjunctive classes of Y. By the P-lower and P-upper approximations of Y in S we mean the sets

, are disjunctive classes of Y. By the P-lower and P-upper approximations of Y in S we mean the sets  and

and  , respectively. The coefficient

, respectively. The coefficient  is called the quality of approximation of classification Y by the set of attributes P, or in short, the quality of classification. It expresses the ratio of all P-correctly classified objects to all objects in the data table.

is called the quality of approximation of classification Y by the set of attributes P, or in short, the quality of classification. It expresses the ratio of all P-correctly classified objects to all objects in the data table.

The main issue in rough set theory is the approximation of subsets or partitions of U, representing knowledge about U, with other sets or partitions that have been built up using available information about U. From the perspective of a particular object  , it may be interesting, however, to use the available information to assess the degree of its membership to a subset X of U. The subset X can be identified with the knowledge to be approximated. Using the rough set approach one can calculate the membership function

, it may be interesting, however, to use the available information to assess the degree of its membership to a subset X of U. The subset X can be identified with the knowledge to be approximated. Using the rough set approach one can calculate the membership function  (rough membership function) as

(rough membership function) as

The value of  may be interpreted analogously as conditional probability and may be understood as the degree of certainty (credibility) to which x belongs to X. Observe that the value of the membership function is calculated from the available data, and not subjectively assumed, as it is in the case of membership functions of fuzzy sets.

may be interpreted analogously as conditional probability and may be understood as the degree of certainty (credibility) to which x belongs to X. Observe that the value of the membership function is calculated from the available data, and not subjectively assumed, as it is in the case of membership functions of fuzzy sets.

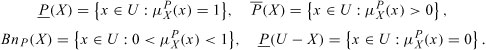

Between the rough membership function and the rough approximations of X the following relationships hold:

In rough set theory there is, therefore, a close link between the granularity connected with the rough approximation of sets and the uncertainty connected with the rough membership of objects to sets.

A very important concept for concrete applications is that of the dependence of attributes. Intuitively, a set of attributes

totally depends upon a set of attributes if all the values of the attributes from T are uniquely determined by the values of the attributes from P. In other words, this is the case if a functional dependence exists between evaluations by the attributes from P and by the attributes from T. This means that the partition (granularity) generated by the attributes from P is at least as "fine" as that generated by the attributes from T, so that it is sufficient to use the attributes from P to build the partition

if all the values of the attributes from T are uniquely determined by the values of the attributes from P. In other words, this is the case if a functional dependence exists between evaluations by the attributes from P and by the attributes from T. This means that the partition (granularity) generated by the attributes from P is at least as "fine" as that generated by the attributes from T, so that it is sufficient to use the attributes from P to build the partition  . Formally, T totally depends on

. Formally, T totally depends on  iff

iff  .

.

Therefore, T is totally (partially) dependent on P if all (some) objects of the universe U may be univocally assigned to granules of the partition  , using only the attributes from P.

, using only the attributes from P.

Another issue of great practical importance is that of knowledge reduction. This concerns the elimination of superfluous data from the data table, without deteriorating the information contained in the original table.

Let  and

and  . It is said that attribute p is superfluous in P if

. It is said that attribute p is superfluous in P if  ; otherwise, p is indispensable in P.

; otherwise, p is indispensable in P.

The set P is independent if all its attributes are indispensable. The subset P' of P is a reduct of P (denoted by  ) if P' is independent and

) if P' is independent and  .

.

A reduct of P may also be defined with respect to an approximation of the classification Y of objects from U. It is then called a Y-reduct of P (denoted by  ) and it specifies a minimal (with respect to inclusion) subset P' of P which keeps the quality of the classification unchanged, i.e.

) and it specifies a minimal (with respect to inclusion) subset P' of P which keeps the quality of the classification unchanged, i.e.  . In other words, the attributes that do not belong to a Y-reduct of P are superfluous with respect to the classification Y of objects from U.

. In other words, the attributes that do not belong to a Y-reduct of P are superfluous with respect to the classification Y of objects from U.

More than one Y-reduct (or reduct) of P may exist in a data table. The set containing all the indispensable attributes of P is known as the Y-core (denoted by  ). In formal terms,

). In formal terms,  . Obviously, since the Y-core is the intersection of all the Y-reducts of P, it is included in every Y-reduct of P. It is the most important subset of attributes of Q, because none of its elements can be removed without deteriorating the quality ofthe classification.

. Obviously, since the Y-core is the intersection of all the Y-reducts of P, it is included in every Y-reduct of P. It is the most important subset of attributes of Q, because none of its elements can be removed without deteriorating the quality ofthe classification.

2.2 Decision rules induced from rough approximations

In a data table the attributes of the set Q are often divided into condition attributes (set  Æ) and decision attributes (set

Æ) and decision attributes (set  Æ). Note that

Æ). Note that  and

and  Æ. Such a table is called a decision table. The decision attributes induce a partition of U deduced from the indiscernibility relation ID in a way that is independent of the condition attributes. D-elementary sets are called decision classes. There is a tendency to reduce the set C while keeping all important relationships between C and D, in order to make decisions on the basis of a smaller amount of information. When the set of condition attributes is replaced by one of its reducts, the quality of approximation of the classification induced by the decision attributes does not deteriorate.

Æ. Such a table is called a decision table. The decision attributes induce a partition of U deduced from the indiscernibility relation ID in a way that is independent of the condition attributes. D-elementary sets are called decision classes. There is a tendency to reduce the set C while keeping all important relationships between C and D, in order to make decisions on the basis of a smaller amount of information. When the set of condition attributes is replaced by one of its reducts, the quality of approximation of the classification induced by the decision attributes does not deteriorate.

Since the tendency is to underline the functional dependencies between condition and decision attributes, a decision table may also be seen as a set of decision rules. These are logical statements of the type " if..., then...", where the antecedent (condition part) specifies values assumed by one or more condition attributes (describing C-elementary sets) and the consequence (decision part) specifies an assignment to one or more decision classes (describing D-elementary sets). Therefore, the syntax of a rule can be outlined as follows:

if f(x, q1) is equal to rq1 and

is equal to rq2 and... f(x, qp) is equal to rqp, then x belongs to Yj1 or Yj2 or ...Yjk,

where  and Yj1, Yj2,..., Yjk are some decision classes of the considered classification (D-elementary sets). If there is only one possible consequence, i.e. k = 1, then the rule is said to be certain, otherwise it is said to be approximate or ambiguous.

and Yj1, Yj2,..., Yjk are some decision classes of the considered classification (D-elementary sets). If there is only one possible consequence, i.e. k = 1, then the rule is said to be certain, otherwise it is said to be approximate or ambiguous.

An object

supports decision rule r if its description is matching both the condition part and the decision part of the rule. We also say that decision rule r covers object x if it matches at least the condition part of the rule. Each decision rule is characterized by its strength defined as the number of objects supporting the rule. In the case of approximate rules, the strength is calculated for each possible decision class separately.Let us observe that certain rules are supported only by objects from the lower approximation of the corresponding decision class. Approximate rules are supported, in turn, only by objects from the boundaries of the corresponding decision classes.

Procedures for the generation of decision rules from a decision table use an inductive learning principle. The objects are considered as examples of decisions. In order to induce decision rules with a unique consequent assignment to a D-elementary set, the examples belonging to the D-elementary set are called positive and all the others negative. A decision rule is discriminant if it is consistent (i.e. if it distinguishes positive examples from negative ones) and minimal (i.e. if removing any attribute from a condition part gives a rule covering negative objects). It may be also interesting to look for partly discriminant rules. These are rules that, besides positive examples, could cover a limited number of negative ones. They are characterized by a coefficient, called the level of confidence, which is the ratio of the number of positive examples (supporting the rule) to the number of all examples covered by the rule.

The generation of decision rules from decision tables is a complex task and a number of procedures have been proposed to solve it (see, for example, Grzymala-Busse, 1992, 1997; Skowron, 1993; Ziarko & Shan, 1994; Skowron & Polkowski, 1997; Stefanowski, 1998; Slowinski, Stefanowski, Greco & Matarazzo, 2000). The existing induction algorithms use one of the following strategies:

(a) The generation of a minimal set of rules covering all objects from a decision table.

(b) The generation of an exhaustive set of rules consisting of all possible rules for a decision table.

(c) The generation of a set of 'strong' decision rules, even partly discriminant, covering relatively many objects from the decision table (but not necessarily all of them).

To summarize the above description of IRSA, let us list particular benefits one can get when applying the rough set approach to analysis of data presented in decision tables:

-

a characterization of decision classes in terms of chosen attributes through lower and upper approximation,

-

a measure of the quality of approximation which indicates how good the chosen set of attributes is for approximation of the classification,

-

a reduction of the knowledge contained in the table to a description by relevant attributes

i.e. those belonging to reducts; at the same time, exchangeable and superfluous attributes are also identified,

-

a core of attributes, being an intersection of all reducts, indicates indispensable attributes,

-

a set of decision rules which is induced from the lower and upper approximations of the decision classes; this shows classification patterns which exist in the data set.

A tutorial example illustrating all these benefits has been given in (Slowinski et al., 2005). For more details about IRSA and its extensions, the reader is referred to Pawlak (1991), Polkowski (2002), Slowinski (1992b) and many others (see Section 7). Internet addresses to freely available software implementations of these algorithms can also be found in the last section of this paper.

2.3 From indiscernibility to similarity

As mentioned above, the classical definitions of lower and upper approximations are based on the use of the binary indiscernibility relation which is an equivalence relation. The indiscernibility implies the impossibility of distinguishing between two objects of U having the same description in terms of the attributes from Q. This relation induces equivalence classes on U, which constitute the basic granules of knowledge. In reality, due to the imprecision of data describing the objects, small differences are often not considered significant for the purpose of discrimination. This situation may be formally modeled by considering similarity or tolerance relations (see e.g. Nieminen, 1988; Marcus, 1994; Slowinski, 1992a; Polkowski, Skowron & Zytkow, 1995; Skowron & Stepaniuk, 1995; Slowinski & Vanderpooten, 1995, 2000; Stepaniuk, 2000; Yao & Wong, 1995).

Replacing the indiscernibility relation by a weaker binary similarity relation has considerably extended the capacity of the rough set approach. This is because, in the least demanding case, the similarity relation requires reflexivity only, relaxing the assumptions of symmetry and transitivity of the indiscernibility relation.

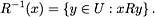

In general, a similarity relation R does not generate a partition but a cover of U. The information regarding similarity may be represented using similarity classes for each object  . More precisely, the similarity class of x, denoted by R(x), consists of the set of objects which are similar to x:

. More precisely, the similarity class of x, denoted by R(x), consists of the set of objects which are similar to x:

It is obvious that an object y may be similar to both x and z, while z is not similar to x, i.e.  and

and  , but

, but  ,

,  . The similarity relation is of course reflexive (each object is similar to itself). Slowinski & Vanderpooten (1995, 2000) have proposed a similarity relation which is only reflexive. The abandonment of the transitivity requirement is easily justifiable. For example, see Luce's paradox of the cups of tea (Luce, 1956). As for the symmetry, one should notice that yRx, which means "y is similar to x", is directional. There is a subject y and a referent x, and in general this is not equivalent to the proposition "x is similar to y", as maintained by Tversky (1977). This is quite immediate when the similarity relation is defined in terms of a percentage difference between evaluations of the objects compared on a numerical attribute in hand, calculated with respect to evaluation of the referent object. Therefore, the symmetry of the similarity relation should not be imposed. It then makes sense to consider the inverse relation of R, denoted by R-1, where xR-1y means again "y is similar to x". R-1(x),

. The similarity relation is of course reflexive (each object is similar to itself). Slowinski & Vanderpooten (1995, 2000) have proposed a similarity relation which is only reflexive. The abandonment of the transitivity requirement is easily justifiable. For example, see Luce's paradox of the cups of tea (Luce, 1956). As for the symmetry, one should notice that yRx, which means "y is similar to x", is directional. There is a subject y and a referent x, and in general this is not equivalent to the proposition "x is similar to y", as maintained by Tversky (1977). This is quite immediate when the similarity relation is defined in terms of a percentage difference between evaluations of the objects compared on a numerical attribute in hand, calculated with respect to evaluation of the referent object. Therefore, the symmetry of the similarity relation should not be imposed. It then makes sense to consider the inverse relation of R, denoted by R-1, where xR-1y means again "y is similar to x". R-1(x),  , is the class of referent objects to which x is similar:

, is the class of referent objects to which x is similar:

Given a subset  and a similarity relation R on U, an object

and a similarity relation R on U, an object  is said to be non-ambiguous in each of the two following cases:

is said to be non-ambiguous in each of the two following cases:

-

x belongs to

X without ambiguity, that is

and

and  ; such objects are also called

; such objects are also called positive;

-

x does not belong to

X without ambiguity (

x clearly does not belong to

X), that is

and

and  (or

(or  Æ); such objects are also called

Æ); such objects are also called negative.

The objects which are neither positive nor negative are said to be ambiguous. A more general definition of lower and upper approximation may thus be offered (see Slowinski & Vanderpooten, 2000). Let  and let R be a reflexive binary relation defined on U. The lower approximation of X, denoted by

and let R be a reflexive binary relation defined on U. The lower approximation of X, denoted by  , and the upper approximation of X, denoted by

, and the upper approximation of X, denoted by  , are defined, respectively, as:

, are defined, respectively, as:

It may be demonstrated that the key properties - inclusion and complementarity - still hold and that

Moreover, the above definition of rough approximation is the only one that correctly characterizes the set of positive objects (lower approximation) and the set of positive or ambiguous objects (upper approximation) when a similarity relation is reflexive, but not necessarily symmetric nor transitive.

Using a similarity relation, we are able to induce decision rules from a decision table. The syntax of a rule is represented as follows:

If f(x, q1) is similar to rq1 and f(x, q2) is similar to rq2 and... f(x, qp) is similar to rqp, then x belongs to Yj1 or Yj2 or... Yjk,

where  and Yj1, Yj2,..., Yjk are some classes of the considered classification (D-elementary sets). As mentioned above, if k = 1 then the rule is certain, otherwise it is approximate or ambiguous. Procedures for generation of decision rules follow the induction principle described in point 2.2. One such procedure has been proposed by Krawiec, Slowinski & Vanderpooten (1998) - it involves a similarity relation that is learned from data. We would also like to point out that Greco, Matarazzo & Slowinski (1998b, 2000b) proposed a fuzzy extension of the similarity, that is, rough approximation of fuzzy sets (decision classes) by means of fuzzy similarity relations (reflexive only).

and Yj1, Yj2,..., Yjk are some classes of the considered classification (D-elementary sets). As mentioned above, if k = 1 then the rule is certain, otherwise it is approximate or ambiguous. Procedures for generation of decision rules follow the induction principle described in point 2.2. One such procedure has been proposed by Krawiec, Slowinski & Vanderpooten (1998) - it involves a similarity relation that is learned from data. We would also like to point out that Greco, Matarazzo & Slowinski (1998b, 2000b) proposed a fuzzy extension of the similarity, that is, rough approximation of fuzzy sets (decision classes) by means of fuzzy similarity relations (reflexive only).

3 THE NEED OF REPLACING INDISCERNIBILITY RELATION BY DOMINANCE RELATION WHEN REASONING ABOUT PREFERENCE DATA

When trying to apply the rough set concept based on indisceribility or similarity to reasoning about preference ordered data, it has been noted that IRSA ignores not only the preference order in the value sets of attributes but also the monotonic relationship between evaluations of objects on such attributes (called criteria) and the preference ordered value of decision (classification decision or degree of preference) (see Greco, Matarazzo & Slowinski, 1998a, 1999b, 2001a; Slowinski, Greco & Matarazzo, 2000a).

In order to explain how important is the above monotonic relationship for data describing multicriteria decision problems, let us consider an example of a data set concerning pupils' achievements in a high school. Suppose that among the attributes describing the pupils there are results in Mathematics (Math) and Physics (Ph). There is also a General Achievement (GA) result, which is considered as a classification decision. The domains of all three attributes are composed of three values: bad, medium and good. The preference order of the attribute values is obvious: good is better than medium and bad, and medium is better than bad. Such attributes are called criteria because they involve an evaluation. One can also notice a semantic correlation between the two criteria and the classification decision, which means that an improvement on one criterion should not worsen the classification decision, while the other criterion is unchanged. Precisely, an improvement of a pupil's score in Math or Ph, with other criterion value unchanged, should not worsen the pupil's general achievement (GA), but rather improve it. In general terms, this requirement is concordant with the dominance principle definedin the Introduction.

This semantic correlation is also called monotonicity constraint, and thus, an alternative name of the classification problem with semantic correlation between evaluation criteria and classification decision is ordinal classification with monotonicity constraints.

Two questions naturally follow consideration of this example:

-

What classification rules can be drawn from the pupils' data set?

-

How does the semantic correlation influences the classification rules?

The answer to the first question is: monotonic "if..., then..." decision rules. Each decision rule is characterized by a condition profile and a decision profile, corresponding to vectors of threshold values on evaluation criteria and on classification decision, respectively. The answer to the second question is that condition and decision profiles of a decision rule should observe the dominance principle (monotonicity constraint) if the rule has at least one pair of semantically correlated criteria spanned over the condition and decision part. We say that one profile dominates another if the values of criteria of the first profile are not worse than the values of criteria of the second profile.

Let us explain the dominance principle with respect to decision rules on the pupils' example. Suppose that two rules induced from the pupils' data set relate Math and Ph on the condition side, with GA on the decision side:

rule#1: if Math=medium and Ph=medium, then GA=good,

rule#2: if Math=good and Ph=medium, then GA=medium.

The two rules do not observe the dominance principle because the condition profile of rule #2 dominates the condition profile of rule #1, while the decision profile of rule #2 is dominated by the decision profile of rule #1. Thus, in the sense of the dominance principle, the two rules are inconsistent, i.e. they are wrong.

One could say that the above rules are true because they are supported by examples of pupils from the analyzed data set, but this would mean that the examples are also inconsistent. The inconsistency may come from many sources. Examples include:

-

Missing attributes (regular ones or criteria) in the description of objects. Maybe the data set does not include such attributes as the

opinion of the pupil's tutor expressed only verbally during an assessment of the pupil's

GA by a school assessment committee.

-

Unstable preferences of decision makers. Maybe the members of the school assessment committee changed their view on the influence of

Math on

GA during the assessment.

Handling these inconsistencies is of crucial importance for knowledge discovery about preferences. They cannot be simply considered as noise or error to be eliminated from data, or amalgamated with consistent data by some averaging operators. They should be identified and presented as uncertain rules.

If the semantic correlation was ignored in prior knowledge, then the handling of the above mentioned inconsistencies would be impossible. Indeed, there would be nothing wrong with rules #1 and #2. They would be supported by different examples discerned by considered attributes.

It has been acknowledged by many authors that rough set theory provides an excellent framework for dealing with inconsistencies in knowledge discovery (Grzymala-Busse, 1992; Pawlak, 1991; Pawlak, Grzymala-Busse, Slowinski & Ziarko, 1995; Polkowski, 2002; Polkowski & Skowron, 1999; Slowinski, 1992b; Slowinski & Zopounidis, 1995; Ziarko, 1998). As we have shown in Section 2, the paradigm of rough set theory is that of granular computing, because the main concept of the theory (rough approximation of a set) is built up of blocks of objects which are indiscernible by a given set of attributes, called granules of knowledge. In the space of regular attributes, the indiscernibility granules are bounded sets. Decision rules induced from rough approximation of a classification are also built up of such granules.

The authors have proposed an extension of the granular computing paradigm that enables us to take into account prior knowledge, either about evaluation of objects on multiple criteria only (Greco, Matarazzo, Slowinski & Stefanowski, 2002), or about multicriteria evaluation with monotonicity constraints (Greco, Matarazzo & Slowinski, 1998a, 1999b, 2000d, 2001a, 2002a, 2002b; Slowinski, Greco & Matarazzo, 2002a, 2009). The combination of the new granules with the idea of rough approximation is called the Dominance-based Rough Set Approach (DRSA).

In the following, we present the concept of granules which permit us to handle prior knowledge about multicriteria evaluation with monotonicity constraints when inducing decision rules.

Let U be a finite set of objects (universe) and let Q be a finite set of attributes divided into a set C of condition attributes and a set D of decision attributes, where  Æ. Also, let

Æ. Also, let

be attribute spaces corresponding to sets of condition and decision attributes, respectively. The elements of XC and XD can be interpreted as possible evaluations of objects on attributes from set  and from set

and from set  , respectively. Therefore, Xq is the set of possible evaluations of considered objects with respect to attribute q. The value of object x on attribute

, respectively. Therefore, Xq is the set of possible evaluations of considered objects with respect to attribute q. The value of object x on attribute  is denoted by Xq. Objects x and y are indiscernible by

is denoted by Xq. Objects x and y are indiscernible by  if

if  for all

for all  and, analogously, objects x and y are indiscernible by

and, analogously, objects x and y are indiscernible by  if

if  for all

for all  . The sets of indiscernible objects are equivalence classes of the corresponding indiscernibility relation Ip or IR. Moreover, Ip(x) and IR(x) denote equivalence classes including object x. ID generates a partition of U into a finite number of decision classes

. The sets of indiscernible objects are equivalence classes of the corresponding indiscernibility relation Ip or IR. Moreover, Ip(x) and IR(x) denote equivalence classes including object x. ID generates a partition of U into a finite number of decision classes  . Each

. Each  belongs to one and only one class

belongs to one and only one class  .

.

The above definitions are valid for regular attributes, not involving monotonicity relationships between values of condition and decision attributes. In this case, the granules of knowledge are bounded sets in XP and XR ( and

and  ), defined by partitions of U induced by the indiscernibility relations Ip and IR, respectively. Then, classification rules to be discovered are functions representing granules IR(x) by granules Ip(x) in the condition attribute space Xp, for any

), defined by partitions of U induced by the indiscernibility relations Ip and IR, respectively. Then, classification rules to be discovered are functions representing granules IR(x) by granules Ip(x) in the condition attribute space Xp, for any  and for any

and for any  .

.

If value sets of some condition and decision attributes are preference ordered (i.e. they are evaluation criteria), and there are known monotonic relationships between value sets of these condition and decision attributes, then the indiscernibility relation is unable to produce granules in XC and XD that would take into account the preference order. To do so, the indiscernibility relation has to be substituted by a dominance relation in  and

and  (

( and

and  ). Suppose, for simplicity, that all condition attributes in C and all decision attributes in D are criteria, and that C and D are semantically correlated.

). Suppose, for simplicity, that all condition attributes in C and all decision attributes in D are criteria, and that C and D are semantically correlated.

Let  be a weak preference relation on U (often called outranking) representing a preference on the set of objects with respect to criterion

be a weak preference relation on U (often called outranking) representing a preference on the set of objects with respect to criterion  . Now,

. Now,  means "xq is at least as good as yq with respect to criterion q". On the one hand, we say that x dominates y with respect to

means "xq is at least as good as yq with respect to criterion q". On the one hand, we say that x dominates y with respect to  (shortly, xP-dominates y) in the condition attribute space XP (denoted by

(shortly, xP-dominates y) in the condition attribute space XP (denoted by  ) if

) if  for all

for all  . Assuming, without loss of generality, that the domains of the criteria are numerical (i.e.

. Assuming, without loss of generality, that the domains of the criteria are numerical (i.e.  for any

for any  ) and that they are ordered so that the preference increases with the value, we can say that

) and that they are ordered so that the preference increases with the value, we can say that  is equivalent to

is equivalent to  for all

for all  ,

,  . Observe that for each

. Observe that for each  , xDpx, i.e. P-dominance is reflexive. On the other hand, the analogous definition holds in the decision attribute space XR (denoted by xDRy), where

, xDpx, i.e. P-dominance is reflexive. On the other hand, the analogous definition holds in the decision attribute space XR (denoted by xDRy), where  .

.

The dominance relations xDpy and xDRx ( and

and  ) are directional statements where x is a subject and y is a referent.

) are directional statements where x is a subject and y is a referent.

If  is the referent, then one can define a set of objects

is the referent, then one can define a set of objects  dominating x, called the P-dominating set (denoted by

dominating x, called the P-dominating set (denoted by  ) and defined as

) and defined as  .

.

If  is the subject, then one can define a set of objects

is the subject, then one can define a set of objects  dominated by x, called the P-dominated set (denoted by

dominated by x, called the P-dominated set (denoted by  ) and defined as

) and defined as  .

.

P-dominating sets  and P-dominated sets

and P-dominated sets  correspond to positive and negative dominance cones in XP, with the origin x.

correspond to positive and negative dominance cones in XP, with the origin x.

With respect to the decision attribute space XR (where  ), the R-dominance relation enables us to define the following sets:

), the R-dominance relation enables us to define the following sets:

is a decision class with respect to

is a decision class with respect to  .

.  is called the upward union of classes, and

is called the upward union of classes, and  is the downward union of classes. If

is the downward union of classes. If  , then

, then  belongs to class

belongs to class  ,

,  , or better, on each decision attribute

, or better, on each decision attribute  . On the other hand, if

. On the other hand, if  , then x belongs to class

, then x belongs to class  ,

,  , or worse, on each decision attribute

, or worse, on each decision attribute  . The downward and upward unions of classes correspond to the positive and negative dominance cones in XR, respectively.

. The downward and upward unions of classes correspond to the positive and negative dominance cones in XR, respectively.

In this case, the granules of knowledge are open sets in XP and XR defined by dominance cones  ,

,

and

and  ,

,

, respectively. Then, classification rules to be discovered are functions representing granules

, respectively. Then, classification rules to be discovered are functions representing granules  ,

,  by granules

by granules  ,

,  , respectively, in the condition attribute space XP, for any

, respectively, in the condition attribute space XP, for any  and

and  and for any

and for any  .

.

4 THE DOMINANCE-BASED ROUGH SET APPROACH (DRSA) TO MULTICRITERIA ORDINAL CLASSIFICATION

4.1 Granular computing with dominance cones

When discovering classification rules, a set D of decision attributes is, usually, a singleton,  . Let us take this assumption for further presentation, although it is not necessary for the Dominance-based Rough Set Approach. The decision attribute d makes a partition of U into a finite number of classes,

. Let us take this assumption for further presentation, although it is not necessary for the Dominance-based Rough Set Approach. The decision attribute d makes a partition of U into a finite number of classes,  . Each object

. Each object  belongs to one and only one class,

belongs to one and only one class,  . The upward and downward unions of classes boil down, respectively, to:

. The upward and downward unions of classes boil down, respectively, to:

where  . Notice that for

. Notice that for  we have

we have  , i.e. all the objects not belonging to class Clt or better, belong to class

, i.e. all the objects not belonging to class Clt or better, belong to class  or worse.

or worse.

Let us explain how the rough set concept has been generalized to the Dominance-based Rough Set Approach in order to enable granular computing with dominance cones (for more details, see Greco, Matarazzo & Slowinski (1998a, 1999b, 2000d, 2001a, 2002a), Slowinski, Greco & Matarazzo (2009), Slowinski, Stefanowski, Greco & Matarazzo (2000)).

Given a set of criteria,  , the inclusion of an object

, the inclusion of an object  to the upward union of classes

to the upward union of classes  , = 2,..., n, is inconsistent with the dominance principle if one of the following conditions holds:

, = 2,..., n, is inconsistent with the dominance principle if one of the following conditions holds:

-

x belongs to class

or better but it is

or better but it is P-dominated by an object

y belonging to a class worse than

,

, i.e.

but

but  Æ,

Æ, -

x belongs to a worse class than

but it

but it P-dominates an object

y belonging to class

or better,

or better, i.e.

but

but  Æ.

Æ.

If, given a set of criteria  , the inclusion of

, the inclusion of  to

to  , where

, where  , is inconsistent with the dominance principle, we say that

, is inconsistent with the dominance principle, we say that  belongs to

belongs to  with some ambiguity. Thus,

with some ambiguity. Thus,  belongs to

belongs to  without any ambiguity with respect to

without any ambiguity with respect to  , if

, if  and there is no inconsistency with the dominance principle. This means that all objects P-dominating

and there is no inconsistency with the dominance principle. This means that all objects P-dominating  belong to

belong to  , i.e.

, i.e.  . Geometrically, this corresponds to the inclusion of the complete set of objects contained in the positive dominance cone originating in

. Geometrically, this corresponds to the inclusion of the complete set of objects contained in the positive dominance cone originating in  , in the positive dominance cone

, in the positive dominance cone  originating in

originating in  .

.

Furthermore,  possibly belongs to

possibly belongs to  with respect to

with respect to  if one of the following conditions holds:

if one of the following conditions holds:

-

according to decision attribute

d,

x belongs to

-

according to decision attribute

d,

x does not belong to

y belonging to

In terms of ambiguity, x possibly belongs to  with respect to

with respect to  , if x belongs to

, if x belongs to  with or without any ambiguity. Due to the reflexivity of the

with or without any ambiguity. Due to the reflexivity of the  -dominance relation

-dominance relation  , the above conditions can be summarized as follows: xpossibly belongs to class

, the above conditions can be summarized as follows: xpossibly belongs to class  or better, with respect to

or better, with respect to  , if among the objects

, if among the objects  -dominated by xthere is an object

-dominated by xthere is an object  belonging to class

belonging to class  or better, i.e.

or better, i.e.

Geometrically, this corresponds to the non-empty intersection of the set of objects contained in the negative dominance cone originating in  , with the positive dominance cone

, with the positive dominance cone  originating in

originating in  .

.

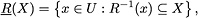

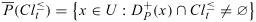

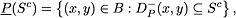

For  , the set of all objects belonging to

, the set of all objects belonging to  without any ambiguity constitutes the P-lower approximation of

without any ambiguity constitutes the P-lower approximation of  , denoted by

, denoted by  , and the set of all objects that possibly belong to

, and the set of all objects that possibly belong to  constitutes the P-upper approximation of

constitutes the P-upper approximation of  , denoted by

, denoted by  . More formally:

. More formally:

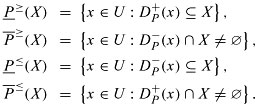

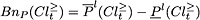

where  . Analogously, one can define the P-lower approximation and the P-upper approximation of

. Analogously, one can define the P-lower approximation and the P-upper approximation of  :

:

where  .

.

The P-lower and P-upper approximations of  ,

,  , can also be expressed in terms of unions of positive dominance cones as follows:

, can also be expressed in terms of unions of positive dominance cones as follows:

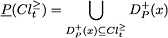

Analogously, the P-lower and P-upper approximations of  ,

,  , can be expressed in terms of unions of negative dominance cones as follows:

, can be expressed in terms of unions of negative dominance cones as follows:

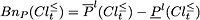

The P-lower and P-upper approximations so defined satisfy the following inclusion properties for each  and for all

and for all  :

:

All the objects belonging to  and

and  with some ambiguity constitute the P-boundary of

with some ambiguity constitute the P-boundary of  and

and  , denoted by

, denoted by  and

and  , respectively. They can be represented, in terms of upper and lower approximations, as follows:

, respectively. They can be represented, in terms of upper and lower approximations, as follows:

where  . The P-lower and P-upper approximations of the unions of classes

. The P-lower and P-upper approximations of the unions of classes  and

and  have an important complementarity property. It says that if object

have an important complementarity property. It says that if object  belongs without any ambiguity to class

belongs without any ambiguity to class  or better, then it is impossible that it could belong to class

or better, then it is impossible that it could belong to class  or worse, i.e.

or worse, i.e.

Due to the complementarity property,  , for

, for  , which means that if x belongs with ambiguity to class

, which means that if x belongs with ambiguity to class  or better, then it also belongs with ambiguity to class

or better, then it also belongs with ambiguity to class  or worse.

or worse.

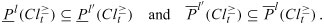

Considering application of the lower and the upper approximations based on dominance  ,

,  , to any set

, to any set  , instead of the unions of classes

, instead of the unions of classes  and

and  , one gets upward lower and upper approximations

, one gets upward lower and upper approximations  and

and  , as well as downward lower and upper approximations

, as well as downward lower and upper approximations  and

and  , as follows:

, as follows:

From the definition of rough approximations  ,

,  ,

,  and

and  , we can get also the following properties of the P-lower and P-upper approximations (see Greco, Matarazzo & Slowinski, 2007, 2012):

, we can get also the following properties of the P-lower and P-upper approximations (see Greco, Matarazzo & Slowinski, 2007, 2012):

From the knowledge discovery point of view, P-lower approximations of unions of classes represent certain knowledge provided by criteria from  , while P-upper approximations represent possible knowledge and the P-boundaries contain doubtful knowledge provided by the criteria from

, while P-upper approximations represent possible knowledge and the P-boundaries contain doubtful knowledge provided by the criteria from  .

.

4.2 Variable Consistency Dominance-based Rough set Approach

The above definitions of rough approximations are based on a strict application of the dominance principle. However, when defining non-ambiguous objects, it is reasonable to accept a limited proportion of negative examples, particularly for large data tables. This extended version of the Dominance-based Rough Set Approach is called the Variable Consistency Dominance-based Rough Set Approach (VC-DRSA) model (Greco, Matarazzo, Slowinski & Stefanowski, 2001a).

For any  , we say that

, we say that  belongs to

belongs to  with no ambiguity at consistency level

with no ambiguity at consistency level  , if

, if  and at least

and at least  of all objects

of all objects  dominating x with respect to P also belong to

dominating x with respect to P also belong to  , i.e.

, i.e.

The term  is called rough membership and can be interpreted as conditional probability

is called rough membership and can be interpreted as conditional probability  . The level l is called the consistency level because it controls the degree of consistency between objects qualified as belonging to

. The level l is called the consistency level because it controls the degree of consistency between objects qualified as belonging to  without any ambiguity. In other words, if

without any ambiguity. In other words, if  , then at most

, then at most  of all objects

of all objects  dominating x with respect to P do not belong to

dominating x with respect to P do not belong to  and thus contradict the inclusion of x in

and thus contradict the inclusion of x in  .

.

Analogously, for any  we say that

we say that  belongs to

belongs to  with no ambiguity at consistency level

with no ambiguity at consistency level  , if

, if  and at least

and at least  of all the objects

of all the objects  dominated by x with respect to P also belong to

dominated by x with respect to P also belong to  , i.e.

, i.e.

The rough membership  can be interpreted as conditional probability

can be interpreted as conditional probability  . Thus, for any

. Thus, for any  , each object

, each object  is either ambiguous or non-ambiguous at consistency level

is either ambiguous or non-ambiguous at consistency level  with respect to the upward union

with respect to the upward union  or with respect to the downward union

or with respect to the downward union  .

.

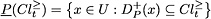

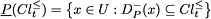

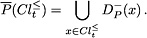

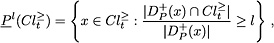

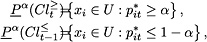

The concept of non-ambiguous objects at some consistency level  leads naturally to the definition of

leads naturally to the definition of  -lower approximations of the unions of classes

-lower approximations of the unions of classes  and

and  which can be formally presented as follows:

which can be formally presented as follows:

Given  and consistency level

and consistency level  , we can define the

, we can define the  -upper approximations of

-upper approximations of  and

and  , denoted by

, denoted by  and

and  , respectively, by complementation of

, respectively, by complementation of  and

and  with respect to

with respect to  as follows:

as follows:

can be interpreted as the set of all the objects belonging to

can be interpreted as the set of all the objects belonging to  , which are possibly ambiguous at consistency level

, which are possibly ambiguous at consistency level  . Analogously,

. Analogously,  can be interpreted as the set of all the objects belonging to

can be interpreted as the set of all the objects belonging to  , which are possibly ambiguous at consistency level

, which are possibly ambiguous at consistency level  . The

. The  -boundaries (

-boundaries ( -doubtful regions) of

-doubtful regions) of  and

and  are defined as:

are defined as:

where  . The VC-DRSA model provides some degree of flexibility in assigning objects to lower and upper approximations of the unions of decision classes. It can easily be demonstrated that for

. The VC-DRSA model provides some degree of flexibility in assigning objects to lower and upper approximations of the unions of decision classes. It can easily be demonstrated that for  and

and  ,

,

The VC-DRSA model is inspired by Ziarko's model of the variable precision rough set approach (Ziarko, 1993, 1998). However, there is a significant difference in the definition of rough approximations because  and

and  are composed of non-ambiguous and ambiguous objects at the consistency level

are composed of non-ambiguous and ambiguous objects at the consistency level  , respectively, while Ziarko's

, respectively, while Ziarko's  and

and  are composed of

are composed of  -indiscernibility sets such that at least

-indiscernibility sets such that at least  of these sets are included in

of these sets are included in  or have an non-empty intersection with

or have an non-empty intersection with  , respectively. If one would like to use Ziarko's definition of variable precision rough approximations in the context of multiple-criteria classification, then the

, respectively. If one would like to use Ziarko's definition of variable precision rough approximations in the context of multiple-criteria classification, then the  -indiscernibility sets should be substituted by

-indiscernibility sets should be substituted by  -dominating sets

-dominating sets  . However, then the notion of ambiguity that naturally leads to the general definition of rough approximations (see Slowinski & Vanderpooten (2000)) loses its meaning. Moreover, a bad side effect of the direct use of Ziarko's definition is that a lower approximation

. However, then the notion of ambiguity that naturally leads to the general definition of rough approximations (see Slowinski & Vanderpooten (2000)) loses its meaning. Moreover, a bad side effect of the direct use of Ziarko's definition is that a lower approximation  may include objects

may include objects  assigned to

assigned to  , where

, where  is much less than

is much less than  , if

, if  belongs to

belongs to  , which was included in

, which was included in  . When the decision classes are preference ordered, it is reasonable to expect that objects assigned to far worse classes than the considered union are not counted to the lower approximation of this union.

. When the decision classes are preference ordered, it is reasonable to expect that objects assigned to far worse classes than the considered union are not counted to the lower approximation of this union.

The VC-DRSA model presented above has been generalized in (Greco, Matarazzo & Slowinski, 2008b; Blaszczynski, Greco, Slowinski & Szelag, 2009). The generalized model applies two types of consistency measures in the definition of lower approximations:

-

gain-type consistency measures

-

cost-type consistency measures

:

:

where  ,

,  ,

,  ,

,  , are threshold values on the consistency measures which are conditioning the inclusion of object

, are threshold values on the consistency measures which are conditioning the inclusion of object  in the

in the  -lower approximation of

-lower approximation of  , or

, or  . Here are the consistency measures considered in (Blaszczynski, Greco, Slowinski & Szelag, 2009): for all

. Here are the consistency measures considered in (Blaszczynski, Greco, Slowinski & Szelag, 2009): for all  and

and

with

being cost-type consistency measures and

being cost-type consistency measures.

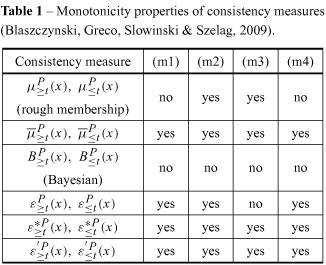

To be concordant with the rough set philosophy, consistency measures should enjoy some monotonicity properties (see Table 1). A consistency measure is monotonic if it does not decrease (or does not increase) when:

(m1) the set of attributes is growing,

(m2) the set of objects is growing,

(m3) the union of ordered classes is growing,

(m4)

As to the consistency measures  and

and  which enjoy all four monotonicity properties, they can be interpreted as estimates of conditional probability, respectively:

which enjoy all four monotonicity properties, they can be interpreted as estimates of conditional probability, respectively:

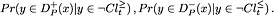

They say how far the implications

are not supported by the data.

For every  , the objects being consistent in the sense of the dominance principle with all upward and downward unions of classes are called

, the objects being consistent in the sense of the dominance principle with all upward and downward unions of classes are called  -correctly classified. For every

-correctly classified. For every  , the quality of approximation of classification

, the quality of approximation of classification  by the set of criteria

by the set of criteria  is defined as the ratio between the number of

is defined as the ratio between the number of  -correctly classified objects and the number of all the objects in the decision table. Since the objects which are

-correctly classified objects and the number of all the objects in the decision table. Since the objects which are  -correctly classified are those that do not belong to any

-correctly classified are those that do not belong to any  -boundary of unions

-boundary of unions  and

and  ,

,  , the quality of approximation of classification

, the quality of approximation of classification  by set of criteria

by set of criteria  , can be written as

, can be written as

can be seen as a measure of the quality of knowledge that can be extracted from the decision table, where

can be seen as a measure of the quality of knowledge that can be extracted from the decision table, where  is the set of criteria and

is the set of criteria and  is the considered classification.

is the considered classification.

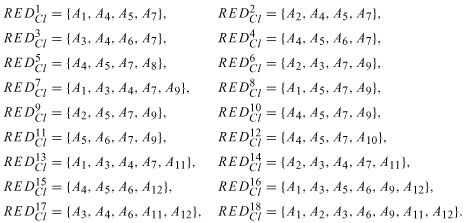

Each minimal subset  , such that

, such that  , is called a reduct of

, is called a reduct of  and is denoted by

and is denoted by  . Note that a decision table can have more than one reduct. The intersection of all reducts is called the core and is denoted by

. Note that a decision table can have more than one reduct. The intersection of all reducts is called the core and is denoted by  . Criteria from

. Criteria from  cannot be removed from the decision table without deteriorating the knowledge to be discovered. This means that in set

cannot be removed from the decision table without deteriorating the knowledge to be discovered. This means that in set  there are three categories of criteria:

there are three categories of criteria:

-

indispensable criteria included in the core,

-

exchangeable criteria included in some reducts but not in the core,

-

redundant criteria being neither indispensable nor exchangeable, thus not included in any reduct.

Note that reducts are minimal subsets of criteria conveying the relevant knowledge contained in the decision table. This knowledge is relevant for the explanation of patterns in a given decision table but not necessarily for prediction.

It has been shown in (Greco, Matarazzo & Slowinski, 2001d) that the quality of classification satisfies properties of set functions which are called fuzzy measures. For this reason, we can use the quality of classification for the calculation of indices which measure the relevance of particular attributes and/or criteria, in addition to the strength of interactions between them. The useful indices are: the value index and interaction indices of Shapley and Banzhaf; the interaction indices of Murofushi-Soneda and Roubens; and the Möbius representation. All these indices can help to assess the interaction between the considered criteria, and can help to choose the best reduct.

4.3 Stochastic dominance-based rough set approach

From a probabilistic point of view, the assignment of object  to "at least" class

to "at least" class  can be made with probability

can be made with probability  , where

, where  is classification decision for

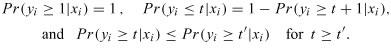

is classification decision for  . This probability is supposed to satisfy the usual axioms of probability:

. This probability is supposed to satisfy the usual axioms of probability:

These probabilities are unknown but can be estimated from data.

For each class  , we have a binary problem of estimating the conditional probabilities

, we have a binary problem of estimating the conditional probabilities  ,

,  . It can be solved by isotonic regression (Kotlowski, Dembczynski, Greco & Slowinski, 2008). Let

. It can be solved by isotonic regression (Kotlowski, Dembczynski, Greco & Slowinski, 2008). Let  if

if  , otherwise

, otherwise  . Let also

. Let also  be the estimate of the probability

be the estimate of the probability  . Then, choose estimates

. Then, choose estimates  which minimize the squared distance to the class assignment

which minimize the squared distance to the class assignment  , subject to the monotonicity constraints:

, subject to the monotonicity constraints:

where  means that

means that  dominates

dominates  .

.

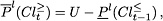

Then, stochastic  -lower approximations for classes "at least

-lower approximations for classes "at least  " and "at most

" and "at most  " can be defined as:

" can be defined as:

Replacing the unknown probabilities  ,

,  , by their estimates

, by their estimates  obtained from isotonic regression, we get:

obtained from isotonic regression, we get:

where parameter

Solving isotonic regression requires  time, but a good heuristic needs only

time, but a good heuristic needs only  .

.

In fact, as shown in (Kotlowski, Dembczynski, Greco & Slowinski, 2008), we don't really need to know the probability estimates to obtain stochastic lower approximations. We only need to know for which object  ,

,  and for which

and for which  ,

,  . This can be found by solving a linear programming (reassignment) problem.

. This can be found by solving a linear programming (reassignment) problem.

As before,  if

if  , otherwise

, otherwise  . Let

. Let  be the decision variable which determines a new class assignment for object

be the decision variable which determines a new class assignment for object  . Then, reassign objects from union of classes indicated by

. Then, reassign objects from union of classes indicated by  to union of classes indicated by

to union of classes indicated by  , such that the new class assignments are consistent with the dominance principle, where

, such that the new class assignments are consistent with the dominance principle, where  results from solving the following linear programming problem:

results from solving the following linear programming problem:

where  are arbitrary positive weights and

are arbitrary positive weights and  means that

means that  dominates

dominates  .

.

Due to unimodularity of the constraint matrix, the optimal solution of this linear programming problem is always integer, i.e.  . For all objects consistent with the dominance principle,

. For all objects consistent with the dominance principle,  . If we set

. If we set  and

and  , then the optimal solution

, then the optimal solution  satisfies:

satisfies:  . If we set

. If we set  and

and  , then the optimal solution

, then the optimal solution  satisfies:

satisfies:  .

.

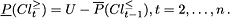

For each  , solving the reassignment problem twice, we can obtain the lower approximations

, solving the reassignment problem twice, we can obtain the lower approximations  ,

,  , without knowing the probability estimates!

, without knowing the probability estimates!

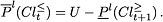

4.4 Induction of decision rules

Using the terms of knowledge discovery, the dominance-based rough approximations of upward and downward unions of classes are applied on the data set in the pre-processing stage. In result of this stage, the data are structured in a way facilitating induction of "if..., then..." decision rules with a guaranteed consistency level. For a given upward or downward union of classes,  or

or  , the decision rules induced under a hypothesis that objects belonging to

, the decision rules induced under a hypothesis that objects belonging to  or

or  are positive and all the others are negative, suggests an assignment to "class

are positive and all the others are negative, suggests an assignment to "class  or better", or to "class

or better", or to "class  or worse", respectively. On the other hand, the decision rules induced under a hypothesis that objects belonging to the intersection

or worse", respectively. On the other hand, the decision rules induced under a hypothesis that objects belonging to the intersection  are positive and all the others are negative, are suggesting an assignment to some classes between

are positive and all the others are negative, are suggesting an assignment to some classes between  and

and  .

.

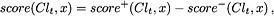

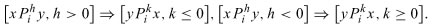

In the case of preference ordered data it is meaningful to consider the following five types of decision rules:

1) Certain

-decision rules. These provide lower profile descriptions for objects belonging to

without ambiguity: if

and

and...

, then

, where for each

, "

" means "

is at least as good as

";

2) Possible

-decision rules. Such rules provide lower profile descriptions for objectsbelonging to

with or without any ambiguity: if

and

and...

, then

possibly belongs to

;

3) Certain

-decision rules. These give upper profile descriptions for objects belonging to

without ambiguity: if

and

and...

, then

, where for each

, "

, means "

is at most as good as

";

4) Possible

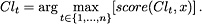

-decision rules. These provide upper profile descriptions for objects belonging to